Most people are asking a recipe book to run a kitchen.

There is a specific kind of frustration that millions of people are experiencing right now with AI, and almost none of them can name what is actually causing it.

They open ChatGPT. They ask it to do something reasonable. Schedule a meeting. Pull live data from a spreadsheet. Monitor a competitor’s pricing page. Run a workflow while they sleep. The tool does not do it. They close the tab and walk away thinking either the technology is overhyped or they are not smart enough to use it properly.

Neither is true.

The tool is not broken. They are using the wrong category of AI for the job. They do not realize there are categories at all.

This is the problem nobody addresses in the breathless coverage of artificial intelligence. The entire conversation is framed as though AI is one thing. You are either “using AI” or you are not. You are either ahead or behind. That binary creates anxiety without producing understanding. It makes people feel they need to “figure out AI” as though there were a single thing to figure out.

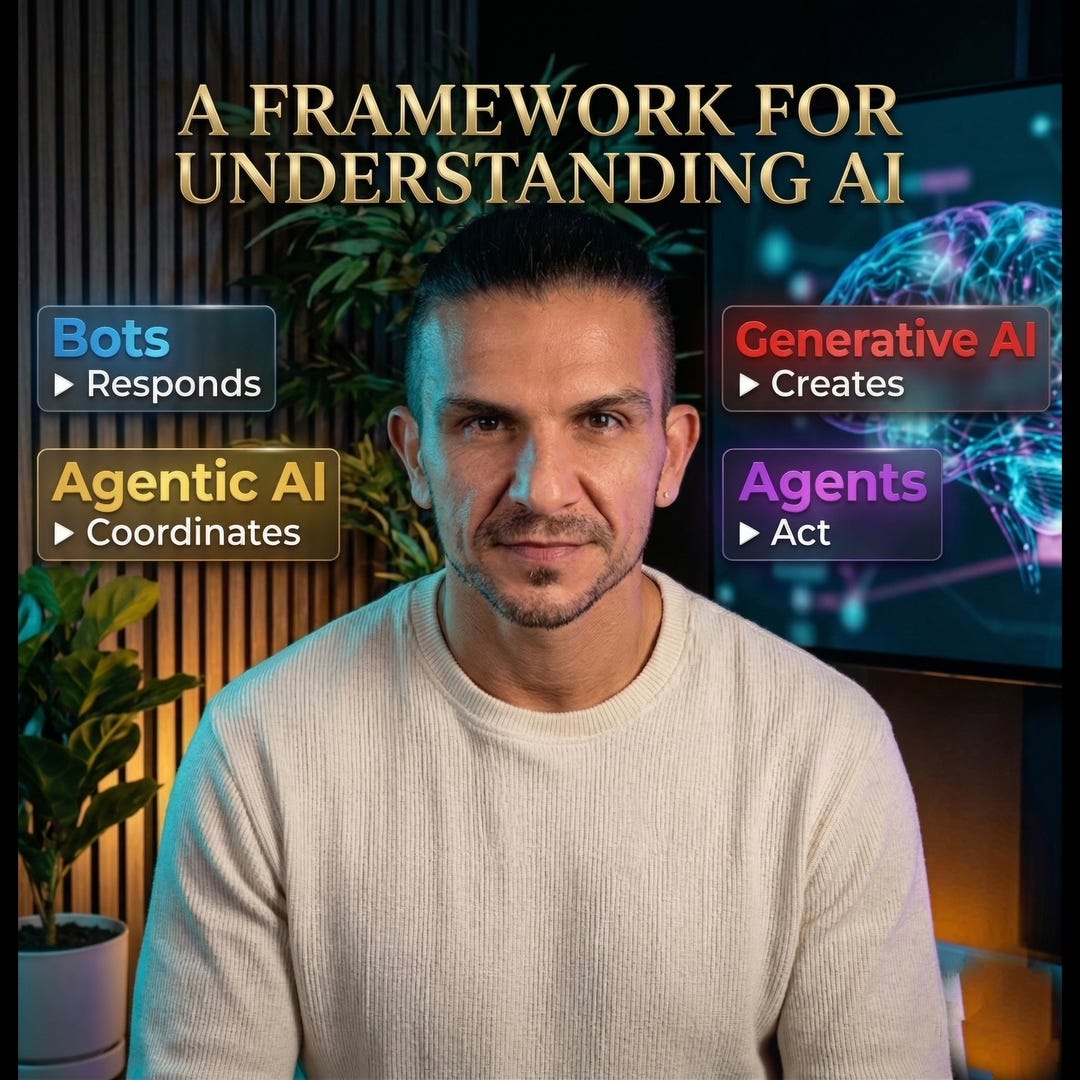

There is not. There are four fundamentally different tools, each designed for a fundamentally different class of problem. Most people have never been told this. So they pick whichever AI product is most popular, apply it to every situation, and conclude the technology is inconsistent when the results are uneven.

The technology is not inconsistent. The application is. Once you see the distinction, a tremendous amount of confusion falls away.

I have been in technology for over 20 years. I currently lead multiple programs developing AI models at Google. I spend my days inside the systems that most people interact with only through a chat window. What I see from that vantage point is that the gap between the people getting real value from AI and the people spinning their wheels is almost never a gap in technical skill. It is a gap in classification.

The people getting results know which tool they need before they open it. The people struggling are asking one tool to do the job of another. That is the entire problem, and it has a straightforward solution.

The simplest way I have found to explain the four categories is through a kitchen.

Imagine a restaurant. There is a recipe book on the counter, an ordering kiosk at the front, a line cook at the stove, and a head chef running the entire service. Each one plays a distinct role. None of them can do the other’s job. The moment you confuse their roles, the kitchen falls apart.

Generative AI is the recipe book. You open it, you ask a question, it gives you an answer. You provide a prompt, it creates something new: a draft, an image, a piece of code. It is powerful in its domain. But it only works when you open it and ask. It never acts on its own. It never reaches out to grab an ingredient. It waits on the counter until you pick it up.

A chatbot is the ordering kiosk. It follows a script. You press a button, it routes you to a predefined response. It does not think. It does not create. It handles a narrow, repeatable interaction with efficiency, and it breaks the moment someone asks it something outside the menu.

An AI agent is the line cook. The line cook can read the recipe book, yes. But the line cook also opens the refrigerator, grabs ingredients, adjusts seasoning based on what is available, and plates the dish. The line cook connects to the actual tools in the kitchen and takes real action. This is the category most people have not fully absorbed yet, and it is where the landscape shifts in a way that changes what is possible for a single person or a small team.

Agentic AI is the head chef. The head chef does not work a single station. The head chef runs the entire service: delegating tasks to the line cooks, adjusting the menu mid-shift, rerouting orders when an ingredient runs out, and learning from last week’s performance to improve tonight’s. The head chef plans, coordinates, and adapts in real time across the whole operation.

Recipe book. Kiosk. Line cook. Head chef.

That is the spectrum. Each role was designed for a different class of problem. The frustration most people feel with AI comes from a single, fixable error: treating the recipe book as though it were the head chef, then concluding the kitchen does not work.

What makes this distinction more than academic is where the boundaries actually sit.

Most people are already familiar with generative AI, even if they do not use that term. ChatGPT, Claude, Gemini, Midjourney. You give it a prompt. It generates something that did not exist before. This is the category that dominates public conversation about AI, and for good reason. It is genuinely transformative for any task that begins with a blank page.

But generative AI is reactive by design. It waits for you. It does not connect to your calendar, your CRM, your email, your database. It cannot monitor anything while you sleep. It cannot update a spreadsheet based on data it gathered from three different sources. It creates. That is the full scope of what it does. Understanding this single boundary prevents the most common source of AI frustration people experience today.

Chatbots occupy familiar territory. The chat window on a customer support page. The phone tree. The FAQ assistant. They have been around for years, long before the current wave. What complicates the picture is that modern chatbots increasingly use generative AI under the hood, which makes them feel smarter. They handle more natural conversation. But they remain structurally limited to responding within defined boundaries. They inform. They do not execute. The moment you need the system to actually do something rather than describe something, you have exceeded what a chatbot was built for.

The real shift happens with AI agents. An agent does not just generate text or follow a script. It connects to your tools and takes action on your behalf. The architecture is different at a foundational level. An AI agent understands your goal, plans the steps to achieve it, executes those steps across your existing software, and verifies whether the result matches what you asked for. That verification loop is what separates an agent from a simple automation. The system checks its own work.
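The goal, plan, execute, verify cycle described above can be sketched in a few lines. This is a minimal illustration, not how any particular product works: the "tools" (`research_lead`, `draft_email`, `add_to_crm`) are hypothetical stand-ins for real integrations, and the plan is hard-coded where a real agent would ask a model to produce it.

```python
from typing import Callable

# Hypothetical tools: plain functions standing in for real integrations
# (a search API, an email client, a CRM). None of these are real APIs.
def research_lead(state: dict) -> None:
    state["lead"] = {"name": "Example Co", "industry": state["industry"]}

def draft_email(state: dict) -> None:
    state["email"] = f"Hello {state['lead']['name']}, ..."

def add_to_crm(state: dict) -> None:
    state.setdefault("crm", []).append(state["lead"]["name"])

def plan(goal: str) -> list[Callable[[dict], None]]:
    # Assumption: a fixed plan. A real agent would derive steps from the goal.
    return [research_lead, draft_email, add_to_crm]

def verify(state: dict) -> bool:
    # The verification loop: check that the result matches what was asked.
    return "email" in state and state["lead"]["name"] in state.get("crm", [])

def run_agent(goal: str, industry: str, max_attempts: int = 3) -> dict:
    for attempt in range(1, max_attempts + 1):
        state = {"goal": goal, "industry": industry}
        for step in plan(goal):      # execute each planned step in order
            step(state)
        if verify(state):            # only stop once the work checks out
            state["attempts"] = attempt
            return state
    raise RuntimeError("goal not met after retries")

result = run_agent("research one lead and add it to my CRM", "logistics")
```

The part worth noticing is `verify`: a simple automation would stop after the last step, while the agent loop only returns once the outcome has been checked against the goal.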

The practical difference is not subtle. You tell an AI agent: research ten potential leads in a specific industry, review their LinkedIn profiles, draft personalized outreach emails, and add them to my CRM. Generative AI would write you a single outreach email if you prompted it well. The agent does the research, writes the emails, and places the contacts in your system. Generative AI talks about doing the work. An agent does the work. The line between these two categories is precise: the moment your task requires connecting to external software and taking real action, you have crossed out of generative AI territory.

Agentic AI extends this further, and it is the newest and most misunderstood category. It is not a single agent performing a single workflow. It is a system of agents that can reason, plan, delegate, and coordinate across multiple tasks with minimal human direction. One agent reads an incoming support email and classifies the issue. Another pulls up the customer’s order history. A third drafts a response. A fourth determines whether a refund is warranted and processes it. An orchestrating agent manages the handoffs between all of them. No human touched any of it.
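The support-email example can be sketched as an orchestrator handing a shared ticket between specialized agents. Everything here is an assumption made for illustration: the `Ticket` fields, the `ORDERS` lookup, and the keyword-based classifier are hypothetical, and a real system would put a model behind each agent.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Ticket:
    customer_id: str
    email_body: str
    issue: Optional[str] = None
    order_history: list = field(default_factory=list)
    reply: Optional[str] = None
    refund_issued: bool = False
    log: list = field(default_factory=list)  # which agents touched the ticket

# Hypothetical order store standing in for a real database.
ORDERS = {"c-42": [{"order_id": "A1", "amount": 30.0, "delivered": False}]}

def classify_agent(t: Ticket) -> None:
    # Toy classifier: a real agent would use a model, not a keyword match.
    t.issue = "missing_item" if "never arrived" in t.email_body.lower() else "other"

def history_agent(t: Ticket) -> None:
    t.order_history = ORDERS.get(t.customer_id, [])

def refund_agent(t: Ticket) -> None:
    undelivered = [o for o in t.order_history if not o["delivered"]]
    t.refund_issued = bool(undelivered)

def reply_agent(t: Ticket) -> None:
    outcome = "A refund has been issued." if t.refund_issued else "We are looking into it."
    t.reply = f"Sorry about the trouble. {outcome}"

def orchestrate(t: Ticket) -> Ticket:
    # The orchestrator manages the handoffs and decides which agents run.
    classify_agent(t); t.log.append("classify")
    history_agent(t); t.log.append("history")
    if t.issue == "missing_item":      # refund agent is consulted only when relevant
        refund_agent(t); t.log.append("refund")
    reply_agent(t); t.log.append("reply")
    return t

ticket = orchestrate(Ticket("c-42", "My package never arrived."))
```

The handoffs here are fixed in code. In a fully agentic system the orchestrator would choose and reorder them itself.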

The key distinctions from a standard agent: agentic AI sets its own sub-goals, breaks complex problems into smaller tasks, delegates those tasks to specialized agents, monitors progress, and learns from outcomes to adjust its approach next time. This is the head chef, not working a station but running the entire operation.

The reason this matters beyond technical curiosity is that the trajectory of this market is not speculative. It is measurable.

The AI agent market reached roughly $7.6 billion in 2025. By 2030, projections place it above $50 billion, growing at approximately 45 percent per year. Gartner projects that by the end of 2026, 40 percent of enterprise applications will include task-specific AI agents, up from less than 5 percent in 2025. That pace of integration has no recent precedent in enterprise technology.
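As a quick sanity check on those market figures, the compounding can be worked out directly. The inputs are the rounded numbers quoted above; the five-year horizon (2025 to 2030) is my reading of them, so treat the result as approximate.

```python
start_billion = 7.6      # quoted 2025 market size, in billions of dollars
annual_growth = 0.45     # quoted approximate yearly growth rate
years = 5                # assumption: compounding from 2025 through 2030

# Size implied by compounding the quoted rate for five years.
projected_billion = start_billion * (1 + annual_growth) ** years

# Growth rate that would be needed to land exactly on $50 billion.
implied_growth = (50 / start_billion) ** (1 / years) - 1

print(f"projected 2030 size: ${projected_billion:.1f}B")
print(f"rate needed to reach $50B: {implied_growth:.1%}")
```

At exactly 45 percent the result lands a little under $50 billion, and a rate closer to 46 percent gets there, so the two quoted figures agree to within rounding.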

The statistic I find most revealing, though, is not a market size number. It is this: roughly 79 percent of enterprises have adopted AI agents in some form. Only 11 percent are running them in production. That 68-point gap between adoption and actual deployment tells you everything about where the landscape really stands. Almost everyone is experimenting. Almost no one has figured out how to execute. The organizations that close that gap first will capture an outsized share of the advantage.

For you, whether you are building a business, refining your workflow, or simply trying to understand where to place your attention, the implication is clear. The tools exist. The confusion about which tool does what is the bottleneck. Classification is the skill that unlocks everything else.

There is a framework I use to decide which category fits any given task. It comes down to two questions.

Does this task require creating something, responding to people, or taking action?

Is the workflow simple, or is it complex and adaptive?

Creating something with a simple workflow: that is generative AI. Write an email, summarize a document, draft a blog post.

Responding to people with a simple, repeatable workflow: that is a chatbot. Handle the same ten customer questions, route inquiries to the right team, collect information from a form.

Taking action across multiple tools with a defined workflow: that is an AI agent. Research leads and enter them into your CRM, monitor an inbox and draft replies, compile data from several sources into a report.

Coordinating complex, adaptive work across multiple tasks simultaneously: that is agentic AI. Run an entire support pipeline, manage a multi-step content production system, orchestrate employee onboarding across departments.

Two questions. Four answers. That filter will prevent you from spending months building with a tool that was never designed for the job you are asking it to do.
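The filter is simple enough to write down as a lookup. This is a sketch under my own assumptions: the names `Category` and `classify_task` are hypothetical, the task kind is modeled as one of three values ("create", "respond", "act") to match the four answers above, and any complex, adaptive workflow is routed to agentic AI regardless of kind.

```python
from enum import Enum

class Category(Enum):
    GENERATIVE_AI = "generative AI"
    CHATBOT = "chatbot"
    AI_AGENT = "AI agent"
    AGENTIC_AI = "agentic AI"

# Simple or defined workflows map directly from the kind of task.
_SIMPLE_WORKFLOW = {
    "create": Category.GENERATIVE_AI,   # write an email, summarize a document
    "respond": Category.CHATBOT,        # answer the same ten customer questions
    "act": Category.AI_AGENT,           # research leads and enter them into a CRM
}

def classify_task(kind: str, adaptive: bool) -> Category:
    """Question 1 is `kind`; question 2 is whether the workflow is complex and adaptive."""
    if kind not in _SIMPLE_WORKFLOW:
        raise ValueError(f"unknown task kind: {kind!r}")
    if adaptive:
        return Category.AGENTIC_AI      # e.g. running an entire support pipeline
    return _SIMPLE_WORKFLOW[kind]

choice = classify_task("act", adaptive=False)
```

For example, "monitor an inbox and draft replies" is an action with a defined workflow, so `classify_task("act", adaptive=False)` returns `Category.AI_AGENT`.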

Here is the point I want to leave you with, because it reaches past the tactical.

The anxiety most people feel about AI is not really about AI. It is about disorientation. The landscape moves fast, the terminology shifts constantly, and the public conversation treats it all as one enormous, undifferentiated wave. That framing leaves people feeling they need to either master everything or risk being left behind. Neither is true.

You do not need to build these systems from scratch. You do not need an engineering background. You need to understand the landscape well enough to make good decisions about where your time and energy go. The four categories, the two-question filter, and the kitchen analogy are enough to give you that orientation.

I build AI systems at Google during the day. On nights and weekends, I use these same categories to decide what to build in my own business. The difference between the people I watch struggle with AI and the people I watch get real, compounding results from it is almost never technical ability. It is knowing which tool they need before they open it.

That knowing is what separates someone who is “using AI” from someone who is using AI well. It is the difference between opening a recipe book and running a kitchen.

Now you know which role each one plays.