Four habits to change now that Opus 4.7 is out

Your feed is about to be flooded with Opus 4.7 feature recaps. Skip them.

The feature recap is not the update. The update is a small set of habit changes, and if you miss them, your prompts still work. They just get quietly worse over time, and you will not be able to tell why.

This is the most common pattern I see when a new model ships. People read the release notes, note the benchmarks, maybe try one or two new capabilities, then go back to prompting the way they did with the previous model. On a minor version bump, that is fine. On a release like 4.7, it is costly. The way you get quality out of this model is different enough from the way you got quality out of 4.6 that keeping your old habits is actively working against you.

I have been in technology for 20 years, and I currently lead multiple programs developing AI models at Google. I have been running Opus 4.7 for the last few days on my own client acquisition system, and over that time I have had to retire four specific prompting habits that were invisible friction in my workflow. There is also a fifth change that sits underneath the other four. It is the reason they exist, and once you see it, the individual habits stop feeling like separate tactics and start feeling like one shift.

We will get to that last.

The first habit to change is how you use your opening prompt.

Load it with everything. Task, constraints, acceptance criteria, file locations, relevant context. The entire brief, delivered upfront, in a single turn. A turn, in case you do not use the term, is one exchange: you send a message, the model answers, that is one turn.

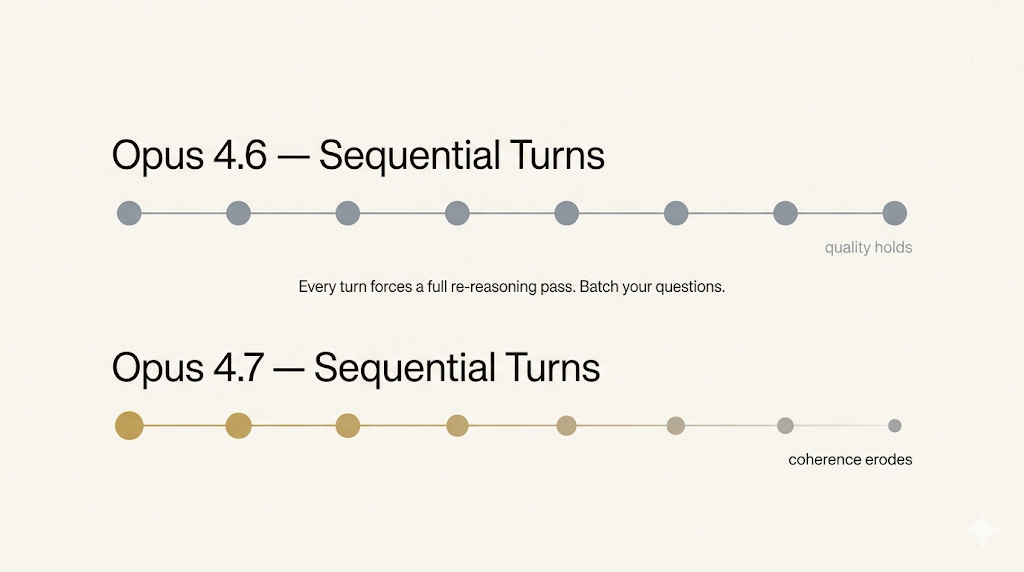

On 4.6 you could get away with opening loosely and tightening as you went. Drop in a task, see what comes back, add constraints when you realized you needed them, correct direction on the next turn. The model absorbed that drift without much penalty. Output quality held up across a dozen turns of conversation.

On 4.7, that pattern starts working against you. The model does more reasoning on every user turn than 4.6 did. Every time you drip in a new constraint mid-conversation, 4.7 re-reasons the entire context from the beginning. The longer the thread runs and the more corrections you stack, the more you erode the quality that would have been there if you had just briefed properly at turn one.

When you adopt this habit, you stop having conversations with the model and start giving it assignments. Your first message becomes a brief instead of an opener. Because 4.7 is strong enough to execute a well-written brief, you get single-turn output that is usable without the back-and-forth you are used to.

If you are refactoring, name the refactor, say what should stay unchanged, list the tests that need to pass, and point to where the files live. If you are designing, give it the constraints and the definition of done. One brief, at the start.

I learned this on my own client acquisition system. Output quality kept dipping around the fifth turn in a conversation, and I could not figure out what was happening. The fix was changing how I opened. A well-briefed model outperforms a well-guided one.

The second habit is the one most people keep getting wrong even after they fix the first.

Batch your follow-up questions. If you have five concerns after reading the first response, send them in a single message, not five separate ones.

This flows from the same reasoning mechanics. Every user turn on 4.7 forces a full re-reasoning pass over the entire conversation. A quick “also, do this” follow-up looks cheap. It is not. Each of those quick follow-ups costs you the same reasoning overhead as a substantive new prompt.

The real cost, though, is not compute or time. It is coherence. When you feed the model five concerns in a single message, it can weigh them together and produce a response that balances all of them. When you feed them one at a time, each response optimizes for the last thing you asked about, and overall coherence slowly erodes. By the time you are on your sixth follow-up, the output has drifted from what the first response was reaching for.

Two or three clean exchanges beats ten or fifteen messy ones. When the first response lands, resist the urge to fire off a quick “one more thing.” Hold your questions, read the output carefully, and send everything you want adjusted in one consolidated turn.

The third habit is a setting change with a disproportionate effect on your output quality. This one only applies if you are working in Claude Code, which is Anthropic’s agentic coding environment. If you are using regular Claude chat or Cowork, you will not see this setting.

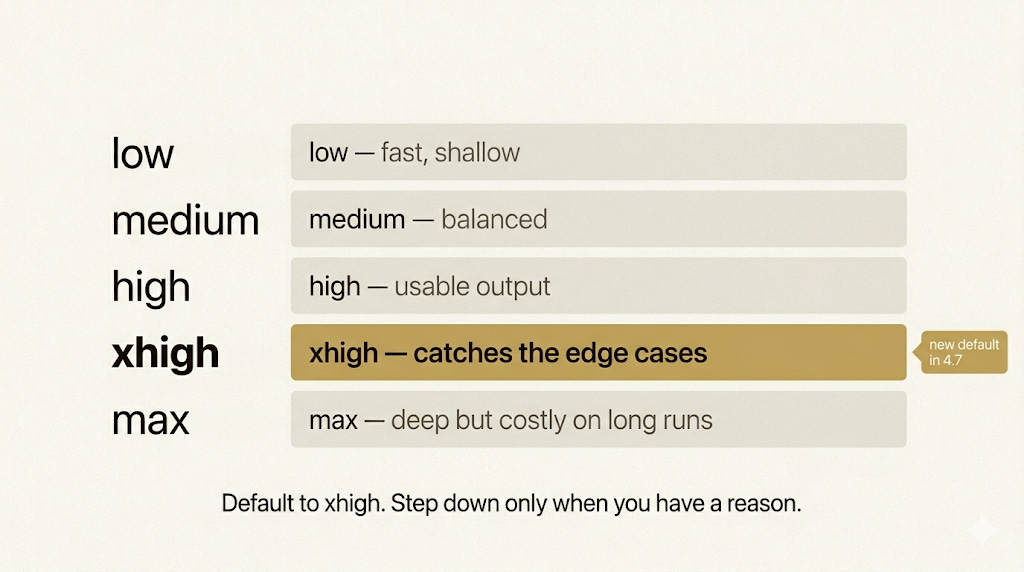

Claude Code lets you set effort levels that tell the model how hard to reason about a task. The options are low, medium, high, xhigh, and max. With 4.7, xhigh is new. It sits between high and max, and it is now the default.

The temptation for most people who upgrade from 4.6 is to port over their old effort setting without thinking about it. Do not. Take the time to experiment with xhigh.

At lower effort levels, the model reasons lightly and answers fast. At max, it reasons as hard as it can, which is sometimes what you want and often overkill. The runaway token usage and latency on long agentic runs at max can be punishing. xhigh gives you the careful reasoning and autonomy you want for serious work, without the cost profile that makes max impractical for sustained use.

xhigh earns its place in the gap between serviceable output and excellent output. High gets you a usable result on most tasks. xhigh catches the edge cases and the subtle bugs you did not think to flag. On anything intelligence-sensitive, like API design, schema migrations, or debugging code you do not fully understand, xhigh is the level where the model actually shows what it can do.

On my own client acquisition pipeline, xhigh caught a production bug in my lead scoring logic that high would have shipped into the world. The difference between those two effort levels was, in that specific case, the difference between shipping a bug and catching one. You can also toggle between levels within the same task if you want to manage token cost on simpler steps while keeping xhigh for the heavy parts.

The fourth habit is about what you stop doing.

If you have been manually setting thinking budgets on 4.6, stop.

On 4.6 you could pass a token budget for reasoning, which was a way of telling the model how much to think before answering. Small budget for simple tasks, larger budget for hard ones. You had to tune it manually for each task type, and getting the tuning wrong meant either sluggish responses on easy problems or shallow responses on hard ones.

That is gone in 4.7. Fixed thinking budgets are no longer supported. Instead you get adaptive thinking, which means the model decides when to reason hard and when to skip reasoning entirely.

In practice, the model will fly through a simple lookup and go deep on a complex refactor, and it is better at making that call than you are. You stop managing the model’s thinking. You manage what you ask it to do.

If you want to push the model toward more thinking on a hard problem, just tell it directly. Something like “think carefully and step by step, this is harder than it looks.” If you want it to stop overthinking a simple lookup, tell it to prioritize a quick response over deep reasoning. Plain language, no settings panel.

The setup time you used to spend tuning budgets is gone. The decisions you used to make about whether a task deserves deeper reasoning are gone. What remains is the work itself. The model knows when to think harder. That is its job now, not yours.

Now the fifth shift, which is the one I told you we would get to at the end.

It is not a habit so much as a mindset update that sits underneath the other four. Once you see it, the individual tactics stop feeling like a list of tips and start feeling like one change expressed in four different surfaces.

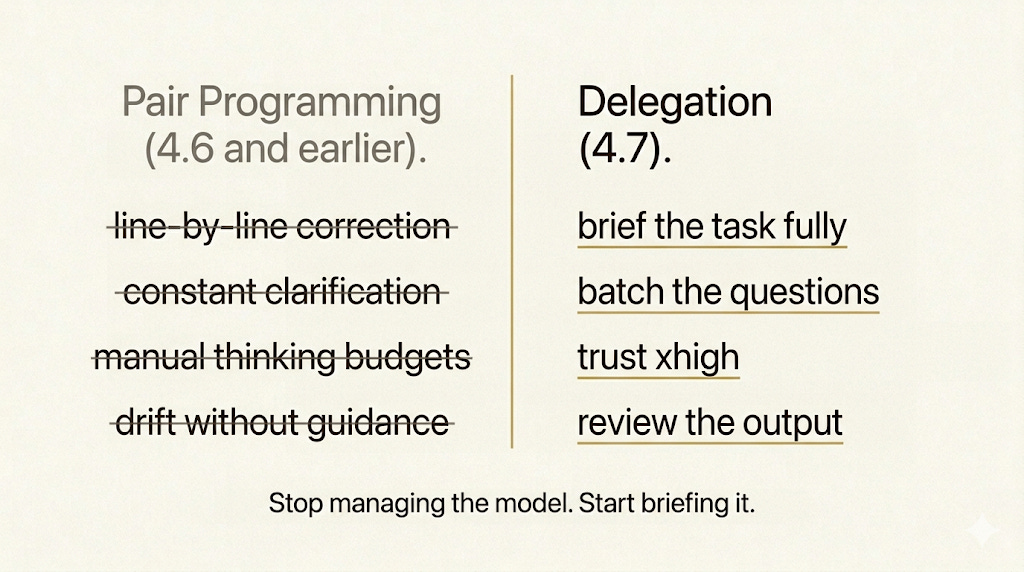

The shift is this: you are no longer pair programming with the model. You are delegating to it.

Pair programming means sitting next to someone and guiding them step by step. You explain, they type, you correct, they adjust. The person next to you needs your hand on the wheel or they drift. Delegation is different. You brief someone capable, you trust them to execute, you review the result. Both are real working modes. They demand different skills from the person doing the delegating.

Earlier models were pair programmers. They needed your hand on the wheel because they would drift without it. Line-by-line correction, constant clarification, stepwise guidance, those habits made sense for those models because those models needed them.

4.7 does not need that kind of oversight for most of a task. It is capable enough to be briefed and left alone to execute. Every one of the first four habits I walked through is a symptom of this bigger update. Loading the first turn, batching your questions, using xhigh, trusting the adaptive thinking. Those are all things you would do naturally if you were delegating to someone capable. They only feel like new habits because the old pair-programming reflexes are still in your hands.

When you make the switch, cycle time drops. Output quality climbs. The work stops feeling exhausting in the way that constant oversight always feels exhausting. You are not managing the model anymore. You are briefing a capable operator and reviewing the output.

There is a test you can run on your own workflow tomorrow.

Open your next Claude Code session and watch how you are interacting with the model. If you are correcting, clarifying, or re-explaining your way through a task, you are still in pair-programming mode. If you are writing a proper brief at the start and reviewing the output at the end, you have made the switch.

The people I see getting compounding value out of each model upgrade are the ones who update their working model when the tool updates. The people who stay stuck are the ones who treat every release as a marginally better version of what they already had, and keep prompting the way they always have.

Briefing is the skill. Reviewing is the skill. Knowing what to hand off and what to keep is the skill. The model is catching up to a level of capability where the bottleneck is no longer the model. It is whether you know how to work with something capable.

Load the first turn. Batch the follow-ups. Default to xhigh. Let the model manage its own thinking. And stop pair programming with something that no longer needs it.

That is the whole update. See you in the next one,

Guney